As you have probably already realized with the previous extension, you really need to understand how websites work to build more complex scrapers. Web Scraper is still fairly interactive and does not require coding, but if you've never opened the developer tools in your browser before, it may get confusing quite quickly. Web scraping is one of the most useful and least understood methods for journalists to gather data. It's the thing that helps you when, in your online research, you come across information that qualifies as data but does not have a convenient "Download" button.

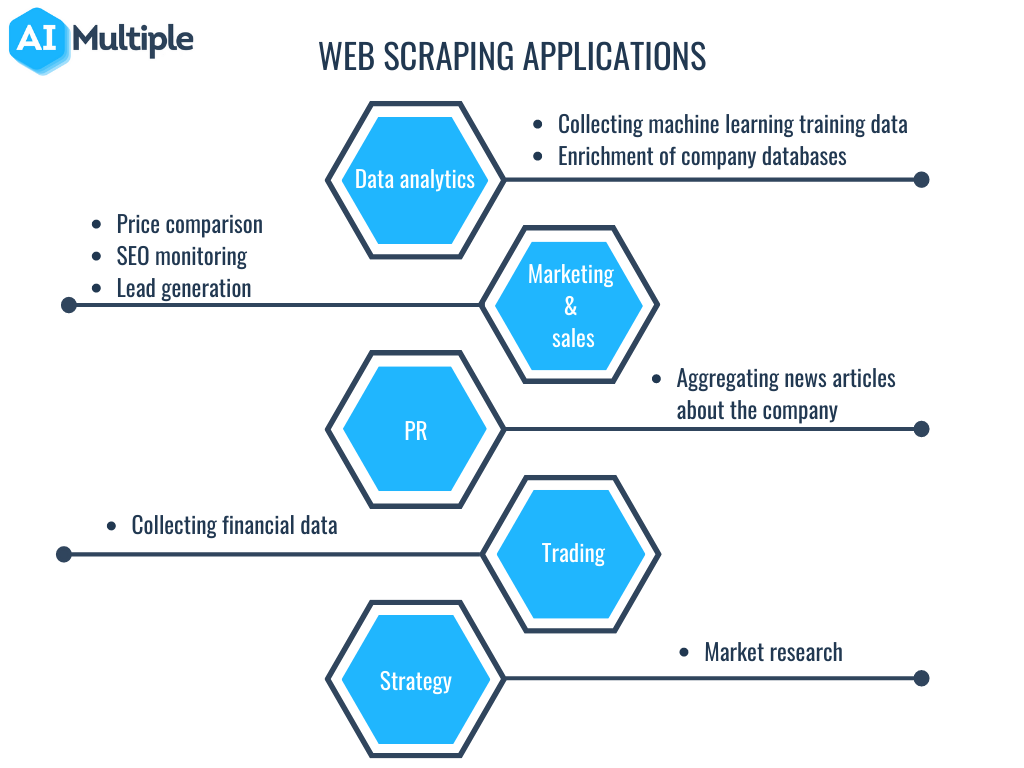

What can data scraping be used for?

After that, use data scrapers that can traverse pagination to find product listings within a category. User-Agent is a request header that tells the website you are visiting about yourself, specifically your browser and OS. This is used to optimize the content for your set-up, but websites also use it to identify bots sending lots of requests, even if they change IPs. Now, we will tell ParseHub to click on each of the products we've selected and extract additional data from each page. In this case, we will extract the product ASIN, Screen Size and Screen Resolution. The data we are scraping is returned as a dictionary.
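To make the User-Agent header and the dictionary result more concrete, here is a minimal Python sketch. It only prepares a request and does not send it. The URL, the User-Agent string, and the values in the record are placeholders made up for illustration; only the field names (ASIN, Screen Size, Screen Resolution) come from the text above.

```python
from urllib.request import Request

# Hypothetical values chosen for illustration only.
PRODUCT_URL = "https://example.com/products?page=1"
BROWSER_USER_AGENT = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
    "AppleWebKit/537.36 (KHTML, like Gecko) "
    "Chrome/120.0.0.0 Safari/537.36"
)


def build_request(url, user_agent):
    """Prepare (but do not send) a GET request carrying a User-Agent header."""
    return Request(url, headers={"User-Agent": user_agent})


request = build_request(PRODUCT_URL, BROWSER_USER_AGENT)

# A scraped record fits naturally into a dictionary.
# The values below are placeholders, not real product data.
product = {
    "asin": "B000000000",
    "screen_size": "27 inches",
    "screen_resolution": "2560 x 1440",
}
```

Passing `request` to `urllib.request.urlopen` would actually send it; that step is left out here so the sketch runs without network access.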

Writing The Review Scraping Function

This will allow us to access the page's HTML content and return the page's body as the result. We then close the Chrome instance by calling the close method on the chrome variable. The resulting output should contain all the dynamically generated HTML code. This is how Puppeteer can help us load dynamic HTML content.

Then, based on the concurrency limit of our Scraper API plan, we need to adjust the number of concurrent requests we're allowed to make in the settings.py file. Concurrency is the number of requests you may make in parallel at any given time. The more concurrent requests you can make, the faster you can scrape. You've built the project's overall structure, so now you're ready to start working on the spiders that will do the scraping. Scrapy has a variety of spider types, but we'll focus on the most popular one, the Generic Spider, in this tutorial.
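As a rough illustration, the concurrency limit is controlled in Scrapy by the `CONCURRENT_REQUESTS` setting. The value 5 below is an assumption for the sake of the example; use whatever limit your own plan actually allows.

```python
# settings.py (fragment)

# Maximum number of requests Scrapy performs in parallel.
# Assumption: the plan allows 5 concurrent requests; replace with your real limit.
CONCURRENT_REQUESTS = 5
```

Setting this higher than the plan permits is likely to produce failed or rejected requests rather than faster scraping.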

Export Scraped Item Data To A CSV File

Keep things too vague and you'll end up with far too much data (and a headache!). It's best to invest time up front to produce a clear plan. This will save you lots of effort cleaning your data in the long run. But there's more to it than just running code and hoping for the best! In this section, we'll cover all the steps you need to follow. The exact method for carrying out these steps depends on the tools you're using, so we'll focus on the (non-technical) basics.

- Therefore, the first thing a web scraper does is send an HTTP request to the website it's targeting.

- You would need to use the urljoin method to resolve these links.

- However, when people refer to 'web scrapers,' they're usually talking about software applications.

- If there's data on a website, then in theory, it's scrapable!
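The point about urljoin can be sketched with Python's standard library, which provides `urllib.parse.urljoin` for turning relative links found on a page into absolute URLs. The page URL and the links below are hypothetical examples.

```python
from urllib.parse import urljoin

# Hypothetical page URL and href values, for illustration only.
PAGE_URL = "https://example.com/category/page1.html"
hrefs = ["/product/123", "page2.html", "https://example.com/about"]

# Resolve every link against the page it was found on.
absolute_links = [urljoin(PAGE_URL, href) for href in hrefs]

for link in absolute_links:
    print(link)
```

A link starting with `/` is resolved against the site root, a bare file name is resolved against the current directory, and a link that is already absolute is returned unchanged.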

However, it should be noted that web scraping also has a dark underbelly. Bad actors often scrape data like bank details or other personal information to commit fraud, scams, intellectual property theft, and extortion. It's good to be aware of these dangers before starting your own web scraping journey. Make sure you keep abreast of the legal rules around web scraping.

The main advantage of using pandas is that analysts can carry out the entire data analytics process using one language. After extracting, parsing, and collecting the relevant data, you'll need to store it. You can instruct your program to do this by adding extra lines to your code. Which format you choose is up to you, but as mentioned, Excel formats are the most common. You can also run your data through Python's regex module (short for 'regular expressions') to extract a cleaner set of data that's easier to read.
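The cleaning and storing steps might look something like the sketch below. To keep it self-contained it uses only the standard library (`re` and `csv`) rather than pandas, and it writes to an in-memory buffer instead of a file. The rows, field names, and price pattern are assumptions invented for the example, not real scraped data.

```python
import csv
import io
import re

# Placeholder rows standing in for scraped output; not real data.
raw_rows = [
    {"name": "  Monitor A ", "price": "Price: $1,299.99"},
    {"name": "Monitor B", "price": "USD 249"},
]

# Matches a number with optional thousands separators and decimals.
PRICE_PATTERN = re.compile(r"\d[\d,]*(?:\.\d+)?")


def clean_price(text):
    """Extract the first number in the text and drop thousands separators."""
    match = PRICE_PATTERN.search(text)
    if match is None:
        raise ValueError(f"no price found in {text!r}")
    return float(match.group().replace(",", ""))


clean_rows = [
    {"name": row["name"].strip(), "price": clean_price(row["price"])}
    for row in raw_rows
]


def to_csv(rows):
    """Serialize the cleaned rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(clean_rows)
```

To write to disk instead, open a file with `newline=""` and pass it to `csv.DictWriter` in place of the buffer.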

Expand the new command you've created and then delete the URL that is also being extracted by default. On the left sidebar, click the PLUS (+) sign next to the product selection and choose the Relative Select command. The rest of the product names will be highlighted in yellow. Below is the complete working Python script for listing multiple PlayStation deals.